Is this hell? Find out in this interview I did on the This is Hell! radio show out of Chicago. Click here to listen or download!

Author: John Patrick Leary

Keywords now shipping for 50% off from Haymarket Books!

You can now order Keywords direct from the publisher, here. Not only do you get a 50% discount through the beginning of 2019, but Amazon won’t have it for weeks. It makes a great holiday gift for the crabby neoliberal subject on your list!

And for those who have asked through the “suggest a keyword” link: “ecosystem” and “disruption” are in the book, but you won’t find them on here.

Keywords for the Age of Austerity 33: Safety Net

A reader recently suggested the term “safety net,” which, as they pointed out, is a metaphor that characterizes our working lives as a life-threatening high-wire act. It’s appropriate, therefore, that the most popular image search result for “safety net” depicts a construction worker saved at the last minute from certain death. In the absolute best of circumstances, that is all the US welfare state offers.

The term is habitually used by well-meaning liberals as a synonym for “welfare state,” a term rendered taboo in the US by a generation of racist dog-whistles. If you ignore the metaphor’s implications of acrobatic danger, it has the advantage of sounding, well, safe, but without much specificity about benefits and inequality. On a podcast sponsored by the University of Chicago’s business school, one co-host, an economist, invoked the metaphor to point out the need for some kind of welfare protections in neoliberal globalized market economies:

And so, if you want to push for globalization, you need at the same time to think about some safety net to absorb some of the costs of globalization. If you want to go back, then you pay the cost of going back.

I think that, by and large, no one is proposing more globalization with better safety nets. You have people that say, more globalization, and people that say, no globalization, but the connection with the retraining, with the safety net, has been lost in many people’s view.

A bit later, he cautions against overdoing it, muddling the metaphor completely in an effort to invoke the old virtues of self-reliance and hard work: “I fear that redistribution by itself is not the solution, because, of course, you need some safety net, but you can’t have people that live under it all their lives.” We’re living under the safety net, now? It does sound safer, when you think about it, than doing cartwheels above it.

What we mean by “safety net” matters precisely because of how many specific mechanisms of wealth redistribution have been shredded in the last 30 years. Elements of the welfare state that we have been made to find unthinkably “generous” now–like state-subsidized housekeepers for the elderly, slashed by Ronald Reagan’s 1982 budget cuts–were still treated as common-sense provisions when the New York Times profiled a blind New York woman, Claire Levy, who feared losing the benefit that winter.

The vagueness of the term, and its implicit reference to measures of absolute, last resort–you can always cut a little more here, a little more there, “trim the fat” around the edges, as our politicians like to say–is part of its design.

The phrase first appeared in the news media in the Nixon administration, in reference to an international financial “safety net” to protect capitalist economies from shocks like the 1973 OPEC embargo. But it entered domestic policy in the Reagan administration, referring to programs for the “truly needy” that would be spared from budget cuts.

Newspaper reports from the early years of the Reagan presidency put the term in scare quotes, marking it as an invention of the president and his spokesmen. In an address to Congress in 1981, Reagan declared, “We will continue to fulfill the obligations that spring from our national conscience. Those who through no fault of their own must depend on the rest of us, the poverty-stricken, the disabled, the elderly, all those with true need, can rest assured that the social safety net of programs they depend on are exempt from any cuts.” But who qualified as “those with true need”? Claire Levy didn’t. Reagan’s Secretary of Health and Human Services described this category as anyone who might starve otherwise, according to a New York Times report from February 1981. Ed Meese, breaking out his trusty dog whistle, called them “the deserving poor.”

To stick with the acrobatic metaphor, it’s useful to imagine the “safety net” as a shifting set of social security measures for working people, the unemployed, children, and the elderly–that is, most people–that just gets lower and lower to the ground. Pretty soon, there won’t be any room left under it.

New review in The Outline

Thanks to Rebecca Stoner for this wonderful first review of Keywords.

A brief excerpt:

By demonstrating how dramatically these words’ meanings have transformed, Leary suggests that they might change further, that the definitions put in place by the ruling class aren’t permanent or beyond dispute. As he explores what our language has looked like, and the ugliness now embedded in it, Leary invites us to imagine what our language could emphasize, what values it might reflect. What if we fought “for free time, not ‘flexibility’; for free health care, not ‘wellness’; and for free universities, not the ‘marketplace of ideas’”?

His book reminds us of the alternatives that persist behind these keywords: our managers may call us “human capital,” but we are also workers. We are also people. “Language is not merely a passive reflection of things as they are,” Leary writes. “[It is] also a tool for imagining and making things as they could be.”

Download your own keywords successories!

Download these posters with inspirational quotations from Keywords: The New Language of Capitalism for your home or workplace! And don’t forget to buy the book!

On the rise of the “Innovation Center,” in the Chronicle of Higher Ed

I have a short piece in the Chronicle on the proliferation of “innovation centers,” “makerspaces,” “hubs,” etc. in American universities. My argument, in short, is that academic institutions and the administrators who run them are, generally speaking, not innovative, and that innovation centers function mostly as vanity projects and political missions for wealthy donors.

At Wayne State University, a commuter campus in Detroit where faculty members and students struggle to turn people out for events, the opening of the Innovation Hub last fall was a big deal. I have rarely seen so many people in one room on campus. Speakers gushed about the university’s “innovation ecosystem” and the “disruptive” start-ups sure to blossom in its “incubators.”

Speakers paced the stage giving TED-style speeches rich in the soothing platitudes of business books. To nurture innovation, explained one, “you’ve got to have serendipity and creativity, and that’s when two plus two equals seven — apologies to the math department,” he added, chuckling at his own baffling joke. “You’ve heard the word ‘innovation’ a lot so far this evening,” said another, apologetically, briefly giving me hope that the term would finally be defined, or better yet, discarded. He continued: “You’re about to hear it a lot more.”

Read the rest of it here.

Happy Imperialist #Entrepreneurship Day from the Keywords #Team! [Updated!]

Every Columbus Day, it’s popular to draw fatuous links between the contemporary cult of entrepreneurship and the legacy of Christopher Columbus’ conquest—err, startup—of America some 500 years ago. After all, who better to take life and leadership lessons from than a famously venal and cruel 15th-century sea captain whose own men overthrew him?

Life Lessons from Christopher Columbus via @LinkedIn #ColumbusDay #entrepreneur #psychology http://t.co/wChvKgwfje pic.twitter.com/cGDczQ0Nzu

— Penina Rybak (@PopGoesPenina) October 11, 2015

Wikipedia attributes this widespread bland affirmation, often misattributed to Columbus, to Andre Gide, from The Counterfeiters (1925).

Today in 1492, Christopher Columbus left Palos, Spain with three ships. The voyage led him to what is now known as the Americas #innovation

— andrew corn (@acornnyc) August 3, 2015

Columbus was the “entrepreneur’s entrepreneur,” whatever that means, says a blogger for VentureBeat. A piece in the Harvard Business Review, always a reliable source for insipid business mythologies, argues that Columbus’ colonization of the Caribbean made him the original disruptive innovator. The author, a business professor named Patrick Murphy, sensitively concedes that “those colonial activities, to be sure, turned wicked.” To be sure. Another common move is to call Spain’s King Ferdinand and Queen Isabella the first “venture capitalists.” Others call Queen Isabella Columbus’ “angel investor.” Genocidal Christianity dies hard.

And there is this, on Columbus’ lessons in product “evangelism”:

Here’s what Columbus had to say to “the very high, very excellent, and puissant Princes, King and Queen of the Spains” on the subject of “evangelism.” In the Journal of the First Voyage of Columbus, the Genoese entrepreneur described the Taíno people of the modern-day Bahamas this way:

They should be good and intelligent servants, for I see that they say very quickly everything that is said to them; and I believe that they would become Christians very easily, for it seemed to me that they had no religion.

Today in Newsweek, Peter Roff laments “social justice warriors” who have sullied Columbus Day. In calling attention to Spanish colonialism, they have replaced the “serious study of history” with “grievance” and political correctness. As he writes:

Even the term “Columbus Day” has become a trigger for social justice warriors alarmed by the image of a pacific, indigenous people massacred by white Europeans seeking to build an empire and find riches far from home. It’s what happens when the demands of political correctness are permitted to overcome the serious study of history.

Regarding that image, though–“of indigenous people massacred by white Europeans seeking to build an empire and find riches far from home”–where is the actual lie?

Roff goes on: “Rather than consider Columbus Day as a time to disparage the consequences of his adventurism,” he writes–yes, enough with the massacre-disparaging!–“let’s rebrand the holiday as a celebration of the accomplishments made by immigrants to making the country what it is today and what it will be in the future.” Immigrant entrepreneurs, he says, like Columbus. “Think of the honorable men and women who have added to this nation’s economic, cultural, scientific, political, diplomatic, artistic and commercial achievements.” Consider Liz Claiborne, Albert Einstein, and Martin Luther King Jr., writes Roff, serious student of history. Martin Luther King, Jr., the immigrant entrepreneur.

In response to efforts last year in Seattle to dump Columbus Day in favor of a holiday honoring America’s indigenous people, Randy Aliment of the local Italian-American Chamber of Commerce told the Post-Intelligencer:

Christopher Columbus was this country’s first and bravest entrepreneur. He had a noble vision, gathered a team, and had the initiative to solicit funds for his high risk startup from the king and queen of Spain.

…to which I can only echo Twitter user Vlad here:

@seattlish @alexjon “Christopher Columbus was this country’s first and bravest entrepreneur.” OMFG WAT

— Vlad Verano (@3rdplacepress)

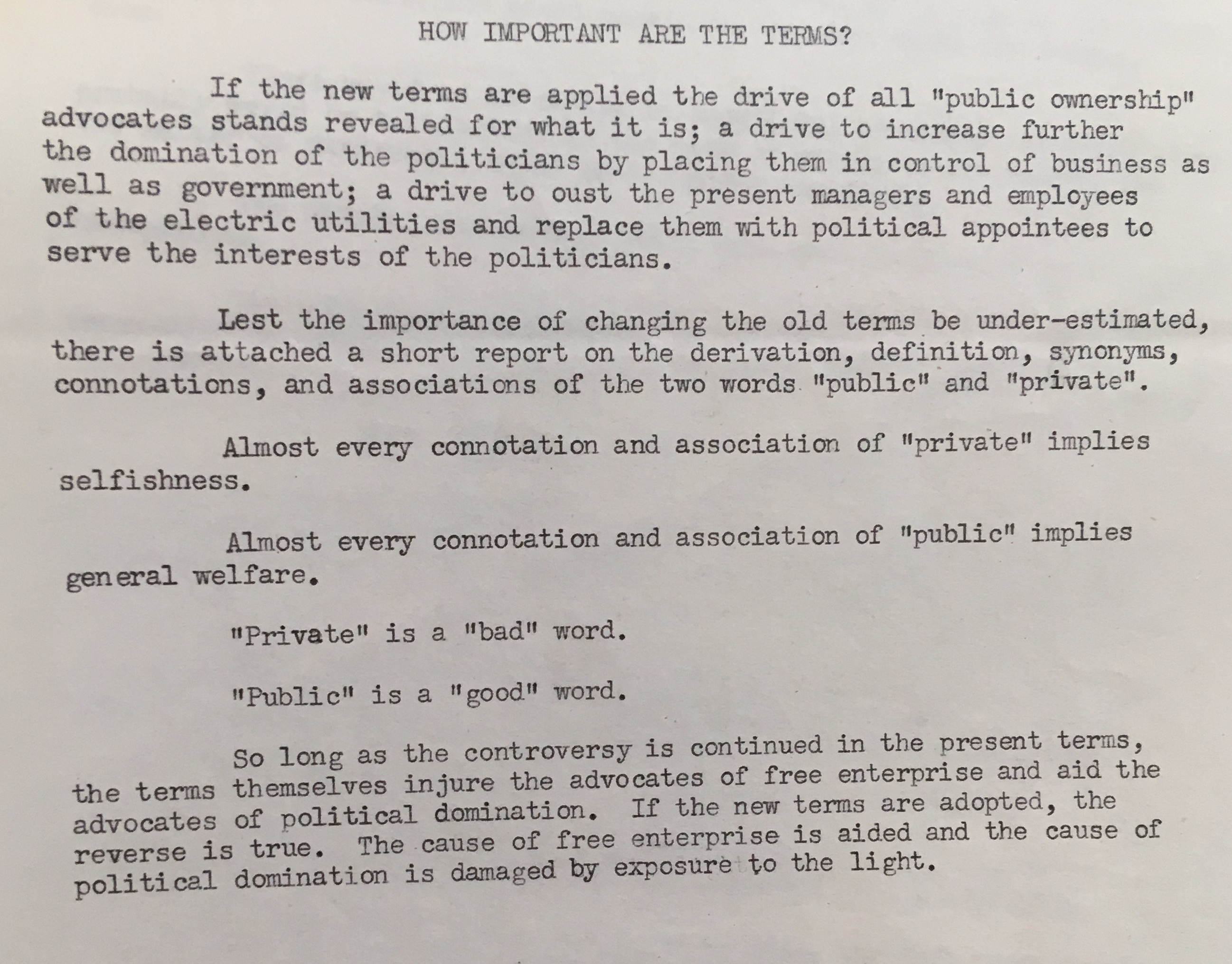

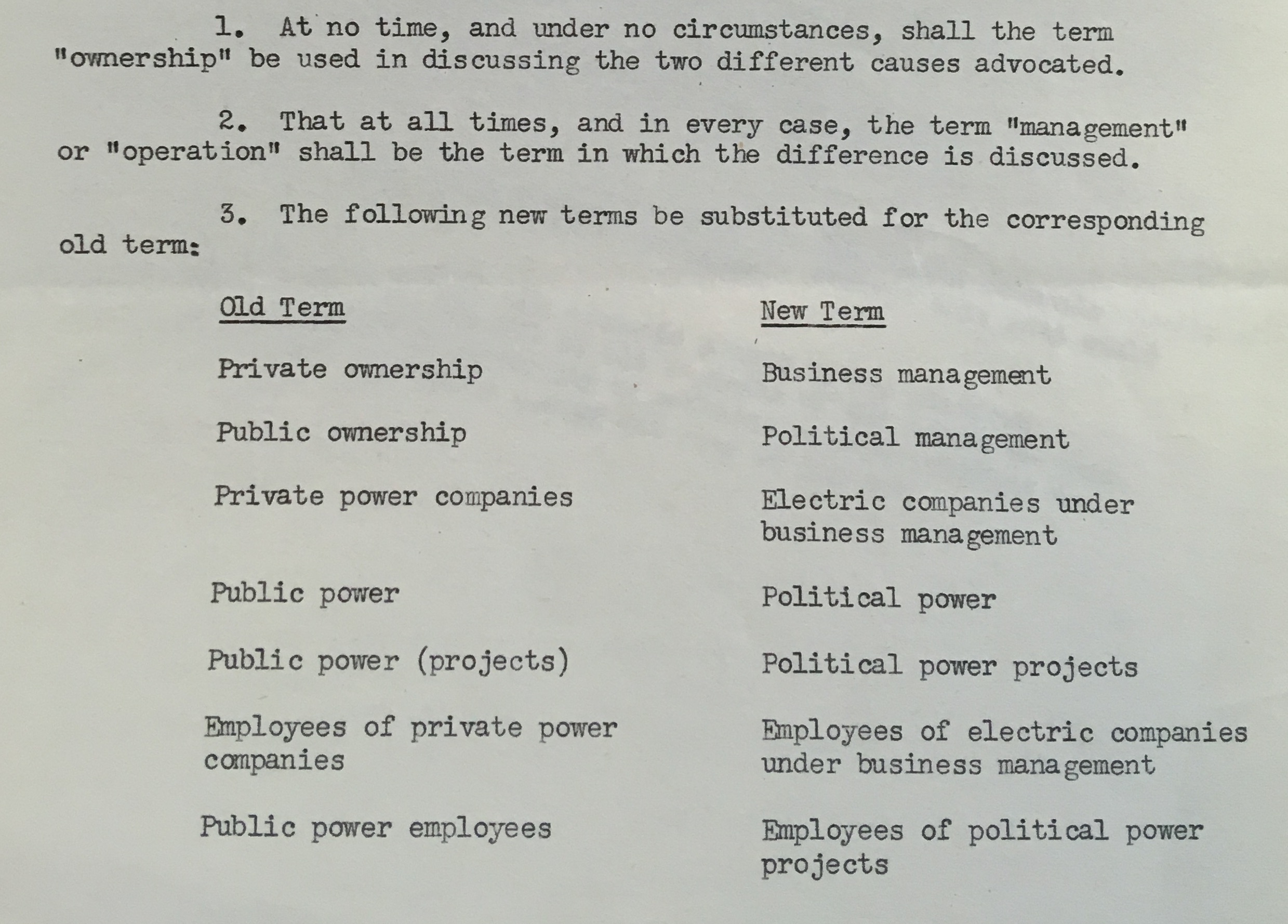

Public, Private, Management

In the archives of the National Association of Manufacturers, I found an interesting memo from the organization’s public relations department. The NAM got its start in the depression of the 1890s, as a confederation of industrialists fighting labor militancy, and in the 1930s became a leading enemy of the New Deal. During World War II, the NAM looked forward to the end of war production and the resumption of the battle against labor and public ownership.

A big part of that battle was in the mass media: the group devoted a lot of resources to programming on radio, television, and in print journalism. Here is a document the group’s communications pros prepared on semantics in late 1944. The paper, which had to do with a campaign for privatization of electrical utilities, is revealing as a snapshot of the ideological tenor of the New Deal era (which the NAM people were surely paranoiacally overstating, of course).

“‘Private’ is a ‘bad’ word.”

“‘Public’ is a ‘good’ word.”

It’s hard not to think that the NAM has, for now, succeeded in reversing these terms, making “public” the bad word. And even if their substitutes for “private ownership” and “public ownership” are quite clunky, “management” has proven quite successful.

Raymond Williams notes in Keywords how “management” came in the postwar world to describe a “body of paid agents to administer increasingly large business concerns.” Management gradually replaced “the bureaucracy” (which referred to bad, private concerns) and “the administration” (which referred to public ones). “Management” united private and public, or rather, replaced the latter with the former. There is no “public”; there are only managers and customers.

The More Innovation Changes, The More It Stays the Same

Reading for “innovation” in the history of management and self-help literature reminds me of a line from the beginning of Ralph Ellison’s Invisible Man: “Beware of those who speak of the spiral of history; they are preparing a boomerang. Keep a steel helmet handy.”

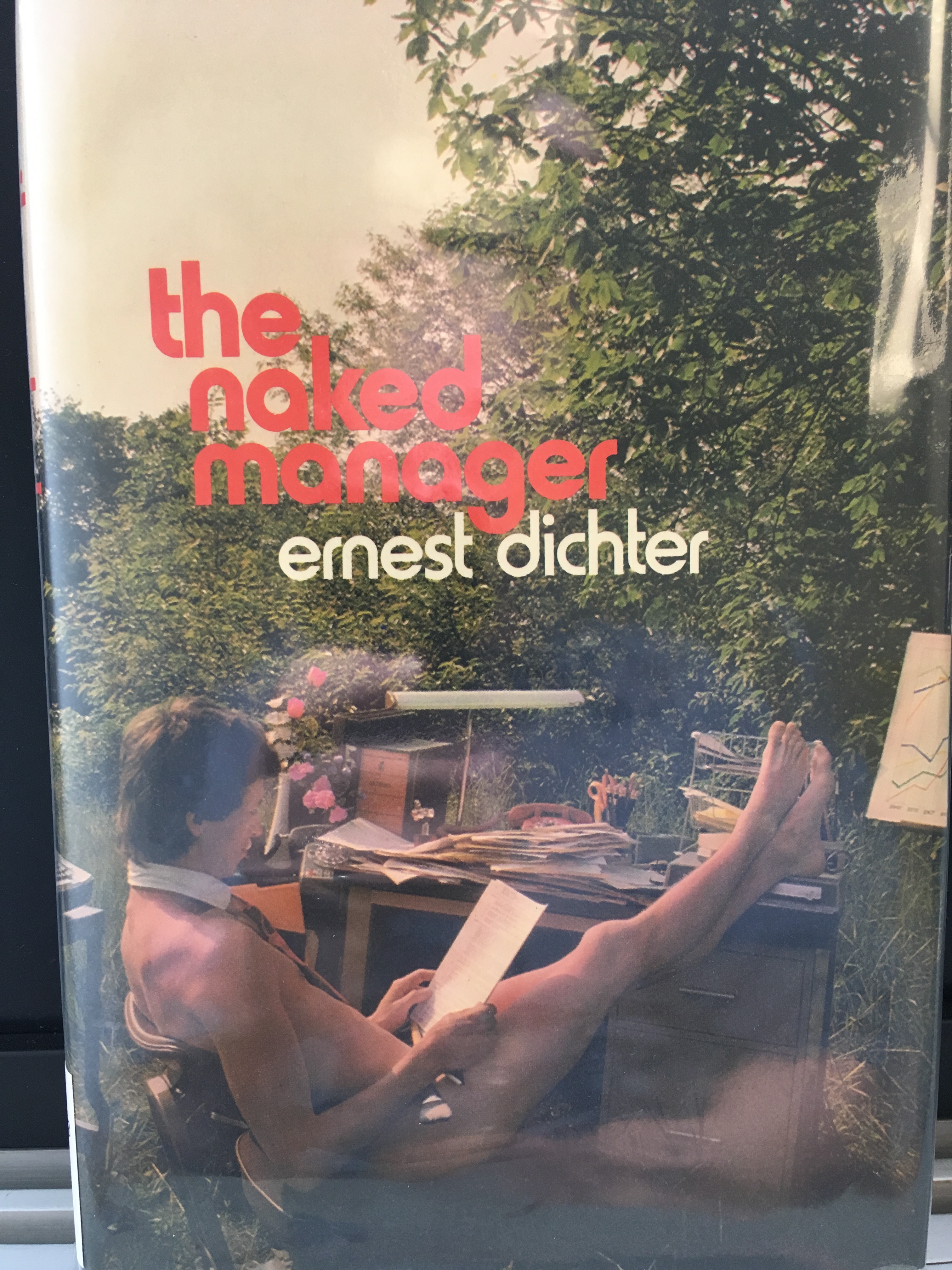

A spiral of history can be understood as a historical movement marked by reverses, repetitions, and changes in direction, never following an ideal straight line–Lenin meant it this way. The post-war theorists of innovation also spoke about history’s spiral, believing as they did in progress but insisting as they had to on the importance of the entrepreneur to achieve it. The entrepreneur was the hero who could set the spiral moving back in the right direction. Peter Drucker, the eminent management theorist, wrote in 1966: “history, it has often been observed, moves in a spiral; one returns to the preceding position, or to the preceding problem, but on a higher level, and on a corkscrew path.” Ernest Dichter, a 1960s branding pioneer and management “guru” (as they say) himself, was fond of the spiral metaphor too. And “innovation spirals” remain a popular subject on consulting and business advice websites today.

Another way to put it: those who write and think about innovation and entrepreneurship have been saying variations on the same ideas for a long time. If there’s an innovation spiral, this is it. It’s the basic irony of “innovation,” in fact: very little that is really new has been said about it for decades.

Which brings me to this story in the New York Times, about automation in the hotel industry.

Maria Mendiola, a concierge at the San Jose Marriott, frets that Amazon’s agreement to deploy its Echo device in hotel rooms across Marriott’s properties will eventually make her position pointless. “Alexa might do my job in the future,” she said.

At the Sheraton Waikiki, next to the Royal Hawaiian, cashiers at the beachside lounge worry about a newly deployed computer system that will allow servers to close out their own checks — making cashiers redundant. There are automatic dishwashers on the market; machines to flip burgers and mix cocktails; robots to deliver room service or help guests book a restaurant reservation…

How many jobs will technology take out? Hoteliers have yet to figure out how guests will react to a more tech-heavy experience. A Marriott spokeswoman said in a statement that the chain was not deploying technology to eliminate jobs but was “personalizing the guest experience and enhancing the stay.”

The last line is key: a touchscreen and a robotic bartender will personalize the guest experience. Clearly when Marriott says “personalized,” they mean “customized,” but the forehead-slapping absurdity of the slip reminded me of an argument that often gets made about “innovation” as a feature of the modern economy. As Lilly Irani has recently argued, so-called “design thinking” emerged in northern California in the mid-2000s as a response to outsourcing. IDEO, the design firm famous for designing the Apple mouse, mostly abandoned product design in favor of systems and organizational design: management consulting, in other words. As the product design end of the business faced increased competition from Chinese firms, Irani explains, IDEO recommitted itself to the ostensibly un-outsourceable management skills of empathy and creativity.

This is a popular and rather old idea, though each generation presents it as new: make yourself safe from outsourcing or automation by mastering cognitive skills. Nearly every English department in America has, at some point, shared on its website or Facebook page that Steve Jobs quote about how the liberal arts make his heart sing or whatever. And this is another old irony of innovation discourse: the idea that the market rewards “soft” skills like creativity and intuition tends to coincide with the actual rationing of humanistic education. We complain about this now, a lot, though many of us in university education tend to imagine the early 1960s as the salad days of the humanities. John W. Gardner lamented the decline of the humanities in his otherwise optimistic 1963 best-seller Self-Renewal: The Individual and the Innovative Society; William Whyte did the same in The Organization Man, a more critical account of U.S. business culture. Drucker said around the same time that poetry was the most practical subject for a businessman to study, but few did anymore.

And Drucker wrote a lot about the issue vexing Marriott’s workers: automation, or as he wrote it, reverently, Automation. He defended it as the unbinding of workers from the Fordist machine, a way “to title the special properties of the human being.” As in “design thinking” now, the claim here is that automated processes unleash creative capacities that are more fully, deeply human–what cannot be automated or outsourced.

Most things, though, can be outsourced or automated if someone’s boss wants to do it and can. Hotel workers are not doing the sort of prestige jobs that Stanford d.school students dream about, but they are unquestionably doing jobs dependent on human interaction. Bartending, in fact, is the very definition of a job requiring empathy. What the hotel workers (and most underpaid or unemployed English professors, for that matter) could probably tell you, of course, is that the only point of automation, under this economic system, is simply to extract the most value from the fewest workers possible–just as it has always been. The more things change, etc.

Watch out for that boomerang!

New article: “Innovation and the Neoliberal Idioms of Development”

I have a new academic-ish article up at boundary 2 online in a special issue edited by David Golumbia on the “Digital Turn.” It’s on “social innovation” and the way that it circulates in the so-called “Third World” and in humanitarian and development agencies. It picks up on the argument of my first book, which explored the importance of “underdevelopment” in the evolution of U.S. nationalism, by tracing development’s successor concept: innovation.

Here’s a teaser:

As an ideology, innovation is driven by a powerful belief, not only in technology and its benevolence, but in a vision of the innovator: the autonomous visionary whose creativity allows him to anticipate and shape capitalist markets.

Given the immodesty of the innovator archetype, it may seem odd that innovation ideology could be considered pessimistic. On its own terms, of course, it is not; but when measured against the utopian ambitions and rhetoric of many “social innovators” and technology evangelists, their actual prescriptions appear comparatively paltry. Human creativity is boundless, and everyone can be an innovator, says Yunus; this is the good news. The bad news, unfortunately, is that not everyone can have indoor plumbing or public lighting.

Read the whole thing here.